ingram59

Windows 2016 LDAP problem with Savin printer

ingram59 posted a question in Active Directory

For this issue I have TWO Windows 2016 servers on different networks, in the same domain. One server is on site; the other is hosted in a vendor cloud. I have full access to both servers. There is NO firewall blockage causing the issue I will describe: I can telnet to both servers from my workstation, and I can run the LDP tool against both servers and successfully connect and bind using the correct credentials.

Here is the issue. I set the Savin MFP to create an LDAP connection to our on-site 2016 server, and it works as expected. I then change ONLY the hostname to point to the cloud 2016 server. When I test that connection I get "Invalid Credentials(49)" in the System Log on the Savin. Traffic is reaching the cloud-hosted server. The System Log in Active Directory on that server shows "An account was successfully logged on." for the LDAP ID that I am using. However, I get 'connection failed' on the Savin when I test.

All pertinent suggestions are welcome.
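One thing that might help narrow this down: when Active Directory rejects a bind with result code 49, the diagnostic message it returns usually carries a `data` sub-code that says why. Below is a minimal Python sketch, assuming that message can be captured somewhere (a packet trace, or a more verbose device log if the Savin offers one). The helper name `explain_bind_failure` and the sample string are made up for illustration; only the sub-code meanings are the commonly documented ones.

```python
import re

# Commonly documented Active Directory sub-codes for LDAP result code 49.
AD_BIND_SUBCODES = {
    "525": "user not found",
    "52e": "invalid credentials (bad password or wrong user name format)",
    "530": "not permitted to log on at this time",
    "531": "not permitted to log on at this workstation",
    "532": "password expired",
    "533": "account disabled",
    "701": "account expired",
    "773": "user must reset password",
    "775": "account locked out",
}


def explain_bind_failure(diagnostic_message: str) -> str:
    """Hypothetical helper: pull the 'data xxx' sub-code out of an AD bind error."""
    match = re.search(r"data\s+([0-9a-fA-F]+)", diagnostic_message)
    if match is None:
        return "no AD sub-code found in message"
    code = match.group(1).lower()
    return AD_BIND_SUBCODES.get(code, f"unrecognized sub-code {code}")


# Illustrative message only; not taken from the servers in this post.
sample = ("80090308: LdapErr: DSID-0C090400, comment: "
          "AcceptSecurityContext error, data 52e, v1db1")
print(explain_bind_failure(sample))
```

A `52e` result against the cloud server, with the same password working on site, would point toward how the device formats the user name or encodes the password rather than toward the account itself, but that is only a guess until the sub-code is actually seen.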


-

I have collections for computers on each floor in our building, and they are correctly populated. Multiple packages have been created and successfully deployed over the last couple of years.

I have created a new package and deployed it to all floor collections. I've tried both "Available" and "Required" deployments. We are getting roughly 50% deployment success across the building. I've spot-checked some of the computers that did NOT receive the application. On those machines the SCCM agent is correctly installed and functioning, and Software Center shows other packages, just not the one in question. I've tried multiple deployments and the result is repeatable.

The deployment is set for immediate availability. The cache is not full, and the EXECMGR and CCMEXEC logs don't show any errors that would indicate a problem. The package information is not showing up in the logs at all.

Assistance is greatly appreciated.

Dino

-

Steps and the Problem Requiring a Solution:

Printers on a given floor have been successfully delivered to users' desktops on their respective floors, initially based on an IP range in Item-Level Targeting. However, not every user on a floor needs every printer on that floor. I want users to see only the printers on their floor that are designated for their specific department (i.e., the LEGAL and ACCTG. departments are on the same floor; only LEGAL staff should see LEGAL printers and only ACCTG. staff should see ACCTG. printers). I DO NOT want to make LOTS of floor-specific GPOs, simply one per floor, using Item-Level Targeting to control delivery.

I refine the item-level targeting by adding a department's USERS OU for the respective printers. When I do this, the printer shows up or stays on the properly targeted computers. Here is the problem: I want to REMOVE the LEGAL printers from the desktops of the ACCTG. staff and vice versa.

The GPO configuration is listed below.

1st round of GPO settings, for initial printer delivery:

- Environment and Settings: GPO delivering a user-configuration printer
- General: Action - Update
- Common: Item-level targeting, IP address range for the floor

2nd round of GPO settings, to isolate printers by department:

- Environment and Settings: SAME GPO delivering a user-configuration printer
- General: Action - Update (forced by the "Common" option)
- Common: (Selected) Remove this item when it is no longer applied
- Common: Item-level targeting, IP address range for the floor AND Users OU for the department receiving the printer

Testing Results:

After running GPUPDATE /FORCE with the 1st group of settings, the printers deliver as expected and they do not show up on computers where they are not needed. However, when I run GPUPDATE /FORCE AFTER changing to the 2nd group of settings and allowing the settings to replicate, the unwanted printers ARE NOT removed from the desktops of the users to whom they don't apply.

I need to be able to clean up those printers but am not sure how to do it beyond what I have tried.
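To make the intended behavior concrete, here is a small Python sketch of the outcome I am expecting from the 2nd round of settings. It only models my expectation (IP range AND department OU, with removal when targeting stops matching); it is not how the Group Policy client is implemented. The IP range, OU labels and function names are hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class Session:
    ip: str        # workstation IP address
    user_ou: str   # hypothetical label for the user's department OU


def in_floor_range(ip: str, first: str, last: str) -> bool:
    """True when ip falls inside the inclusive range first..last."""
    addr = ipaddress.ip_address(ip)
    return ipaddress.ip_address(first) <= addr <= ipaddress.ip_address(last)


def expected_outcome(session: Session, first: str, last: str,
                     printer_ou: str, remove_when_not_applied: bool) -> str:
    """Model of the expected result for one printer item (an assumption, not GPO internals)."""
    matches = in_floor_range(session.ip, first, last) and session.user_ou == printer_ou
    if matches:
        return "deliver"
    return "remove" if remove_when_not_applied else "leave as-is"


# Hypothetical floor range and departments.
floor = ("10.1.4.1", "10.1.4.254")
legal_user = Session(ip="10.1.4.20", user_ou="LEGAL")
acctg_user = Session(ip="10.1.4.21", user_ou="ACCTG")

print(expected_outcome(legal_user, *floor, printer_ou="LEGAL", remove_when_not_applied=True))
print(expected_outcome(acctg_user, *floor, printer_ou="LEGAL", remove_when_not_applied=True))
```

The second call is the case that fails for me in practice: the ACCTG. user no longer matches the targeting for the LEGAL printer, so I expect "remove", yet the printer stays.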

-

I didn't see a category for this so I'm posting it here.

Current Environment:

I have four Windows 2012 R2 servers configured for DFS and two namespace servers (DCs). I'm currently using DFS Namespaces and NOT replication. I have numerous namespaces, each with only ONE FOLDER TARGET. I have between 1 and 2 TB of space on each DFS server.

I need to migrate a large high-level subdirectory and its content (approx. 800 GB) FROM one of these servers TO a fifth DFS Windows 2012 R2 server in order to distribute content more evenly and balance drive sizes. I need to do this without risking data content or integrity, while keeping all content current and making the process transparent to the user community. I want to use the replication capability of DFSR. However, first I have some questions based on the following testing that I completed.

TESTING:

I added a fifth DFS server. I created FolderA on Server4 of my DFS servers, and created a new Namespace1 with a folder target pointing to \\Server4\FolderA. It worked fine. I then set up the FIRST REPLICATION group using Server4 and Server5, with Server4 as PRIMARY pointing to FolderA (\\SERVER4\FOLDERA) and Server5 as the replication partner using FolderB (\\SERVER5\FOLDERB). I DID NOT ADD another folder target to Namespace1.

I would then open the namespace (\\dfs\namespace1) and paste files into it. Based on my configuration I anticipated that files would ALWAYS be dropped in \\Server4\FolderA and REPLICATED to \\Server5\FolderB, since my FOLDER TARGET was on Server4. However, while monitoring the folders on BOTH servers I got mixed results. Sometimes the pasted content showed up FIRST on \\Server5\FolderB and then quickly replicated to \\Server4\FolderA. Other times it would show up on \\Server4\FolderA and replicate to \\Server5\FolderB. It appears that replication puts content in either one of the replicated folders, IGNORING the Folder Target that was defined.

QUESTIONS:

With replication configured between two servers, is A SINGLE FOLDER TARGET ignored, with data going to EITHER location, or was I seeing a false result due to the refresh timing of the folders? (I had remote desktops open on BOTH servers and was monitoring FolderA on Server4 and FolderB on Server5.)

What is the best approach to take to migrate the data to the new server? Here is my thought. Please provide input or corrections.

Steps:

- Pre-seed content with Robocopy or a data restore to Server5.
- Set up replication using the "Replication Group for Data Collection" option.
- Allow content to replicate.
- In the late afternoon, add a new Folder Target (Server5\FolderB) to Namespace1.
- Delete the ORIGINAL Folder Target (Server4\FolderA) from Namespace1.
- Delete the Replication Group.

What am I missing? And what will happen to replicated content if I use the steps as shown? I've had strange things occur with replicated content if it isn't done correctly (the data disappears).

Thanks in advance for your time and input.

Dino
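For the pre-seed step, this is roughly the Robocopy command line I have in mind, written as a small Python sketch that only builds and prints the command (it does not run it). The switches follow the pattern commonly recommended for DFSR pre-seeding; the log path and the function name `build_preseed_command` are my own placeholders, and the retry and thread counts are assumptions to adjust.

```python
def build_preseed_command(source: str, destination: str, log_path: str) -> list:
    """Build a Robocopy argument list for pre-seeding a DFSR replicated folder."""
    return [
        "robocopy", source, destination,
        "/E",                  # copy subdirectories, including empty ones
        "/B",                  # backup mode, to get past ACLs that block reads
        "/COPYALL",            # data, attributes, timestamps, ACLs, owner, auditing
        "/R:6", "/W:5",        # six retries, five seconds apart
        "/MT:64",              # multithreaded copy
        "/XD", "DfsrPrivate",  # never copy DFSR's private staging folder
        "/TEE",                # write output to the console as well as the log
        f"/LOG:{log_path}",
    ]


# Server and folder names are from my test setup; the log path is hypothetical.
command = build_preseed_command(r"\\Server4\FolderA", r"\\Server5\FolderB",
                                r"C:\Temp\preseed.log")
print(" ".join(command))
```

My understanding is that the pre-seeded files need matching data and ACLs on both sides so that DFSR treats them as identical, which is why /COPYALL is in there; corrections welcome if that understanding is wrong.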

-

Please read CAREFULLY before replying. I tried to be concise in my testing description. I need this resolved; I've hit a dead end and need some recommendations.

- I have two domains, A and B, that have two-way transitive trusts.
- I need the computers in DomainA to connect to DFS shares in DomainB.
- OU1 and OU2 are at the same level and inheriting policies.
- OU2 has a sub-OU, OU3.
- Both computers and users are in DomainA, and the DFS shares are in DomainB.

I've done the following testing and CAN'T, for the life of me, understand why my users can't connect to DFS from the problematic OU. My testing was an attempt to rule out user rights and narrow it down to a GPO issue, but I still can't find the culprit. I ruled out USER rights issues by flipping users and computers. Below is how everything played out.

- User1 on Computer1 in OU1 (won't connect to DFS)
- User2 on Computer1 in OU1 (won't connect to DFS)
- User1 on Computer2 in OU1 (won't connect to DFS)
- User2 on Computer2 in OU1 (won't connect to DFS)
- User1 on Computer1 in OU2 (connects to DFS)
- User2 on Computer1 in OU2 (connects to DFS)
- User1 on Computer2 in OU2 (connects to DFS)
- User2 on Computer2 in OU2 (connects to DFS)
- User2 on Computer2 in OU3 with policy inheritance (connects to DFS)
- User2 on Computer2 in OU3 with BLOCKED inheritance and linked GPOs from OU1 (connects to DFS)

-

Please read the full posting before answering. I've searched extensively for a GPO fix to my issue but I can't find one.

I've got a lot of users that work with IE. Under Internet Options / Programs / Default Web Browser there are two options, "Tell me if Internet Explorer is not the default web browser" and "Make default". Both are grayed out on some machines and, of course, cannot be modified. On other machines the "Tell me...." option is active and can be checked, and "Make default" is grayed out. Machines with both conditions have the SAME group policies applied to them.

I have a PS script on my DC that lets me search GPOs for specific content. However, I don't know what to search for in order to find the GPO that contains these settings. Google searches have proved fruitless in finding the GPO options that need to be changed. I'm less concerned about "Make default" than I am about the "Tell me...." option.

Where are these settings located and what are they titled? If they are not specific settings, what registry changes can I push that would allow me to activate the "Tell me..." option in IE? Also, if the same policies are being applied (meaning they are not being blocked by security filtering), why are the settings on these machines different?

This is extremely frustrating and I'm really looking for a solution. Thanks in advance for your assistance.

-

- internet explorer

- gpo


-

Computer not showing up in collection

ingram59 replied to ingram59's topic in Configuration Manager 2012

One addition. It does appear that after I reboot the SCCM server the device DOES NOW appear in the collection AND the Content status properly updates and is available. However, a REBOOT is REQUIRED every time a change like this is made. That WAS NOT and SHOULD NOT be the case.

-

Here is the scenario. Please read the entire post before responding.

- SCCM 2012, recently updated to the latest release.
- Device collection with a Query rule to retrieve computers based on a certain condition.
- Collection and query rule were built prior to the upgrade.
- Collection is limited to All Systems.
- I create a Direct Rule under the Membership Rules for that collection and add a device.
- I select the device and it does show up in the Membership Rules.
- After closing the window, refreshing the collection, updating membership AND waiting overnight, the member count DOES NOT change and my direct-add machine DOES NOT appear in the collection.
- I go back in to the collection Properties / Membership Rules and it IS listed there as the Direct entry, along with my Query rule that was previously added.
- The device in question is present in All Systems (it is my workstation).

2nd testing step:

- Created a BRAND NEW TEST COLLECTION.
- Did a direct add of my machine.
- Collection shows zero entries following update and refresh.
- Added two other random machines.
- Collection shows zero entries following update and refresh.
- Rebooting the SCCM server has no impact.

This is only one of the symptoms, which indicates that the issue is NOT just collection related. Another symptom relates to updating distribution points after adding new drivers. The update completes, but the date and time stamp on the Content status DOES NOT reflect the update that was executed, even when waiting overnight.

Your assistance is greatly appreciated.

-

The issue I'm describing is VERY FRUSTRATING. We are decommissioning a proxy server, yet our firewall is still showing Windows 7 computers hitting that proxy server. Here are the steps that I've taken and what I've looked at on one of the computers in question.

- DESELECTED "Automatically Detect Settings".
- Set "Use automatic configuration script" to reference the CORRECT proxy server that is currently in use.
- DESELECTED AND CLEARED the entry under "Proxy Server" for "Use a proxy server for your LAN......". The fields are blank and UNCHECKED.
- Edited the registry and removed ALL references to the proxy server that we DO NOT want to reference (the one being decommissioned), both the IP address and the hostname.
- Changed the one installed application to point to the correct proxy server.
- Flushed the DNS cache on the workstation.

With ALL these steps taken, can someone tell me where to look, or tell me why the machine is still trying to reach out to the proxy that we're decommissioning?

-

That doesn't make sense to me. If you look at the 2nd screen shot, the computer name is different. The "Computer" listed is the machine that I was on when I viewed the event log. I did this on a member server and on a DC.

-

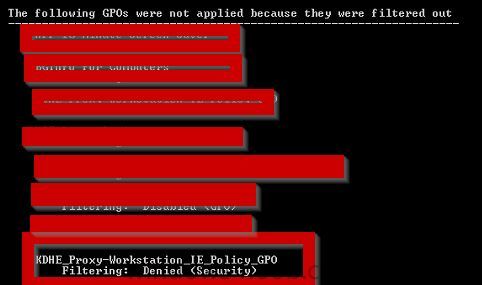

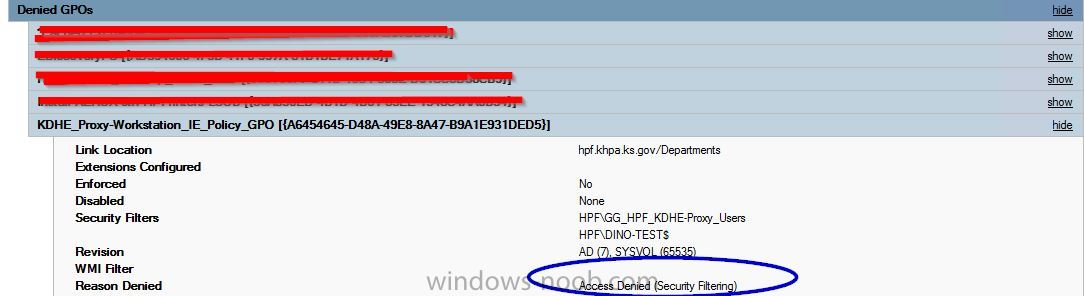

I have a GPO containing User and Computer settings. It is 'enabled' in the Details tab and 'Authenticated Users' was added under the Delegation tab. It is linked to the proper OUs and the Security Filtering contains the group containing the members needing the GPO. Also, for added measure, Domain Computers was added in the Delegation tab.

However, the policy is not applying, even with a reboot, and I HAVE given it adequate time to replicate. When I run GPUPDATE /Force and then GPRESULT /r from the command line on my test workstation, logged in with my Domain Admin account, the policy does not show up, and it does not appear in the HTML file when I create it using GPRESULT. This machine is in an OU to which the GPO is linked. When I run the GPO Modeling Wizard, the policy shows up as Denied... Access Denied (Security Filtering). See attached screen shot.

How do I get this policy to apply to the computer and associated user when policy refreshes?

I've attached two screen shots: the results of the modeling wizard, and the RSOP run from an 'elevated' command line.

This is time sensitive. I need input as soon as reasonably possible. Thanks in advance for your input.

Thanks,
Dino

-

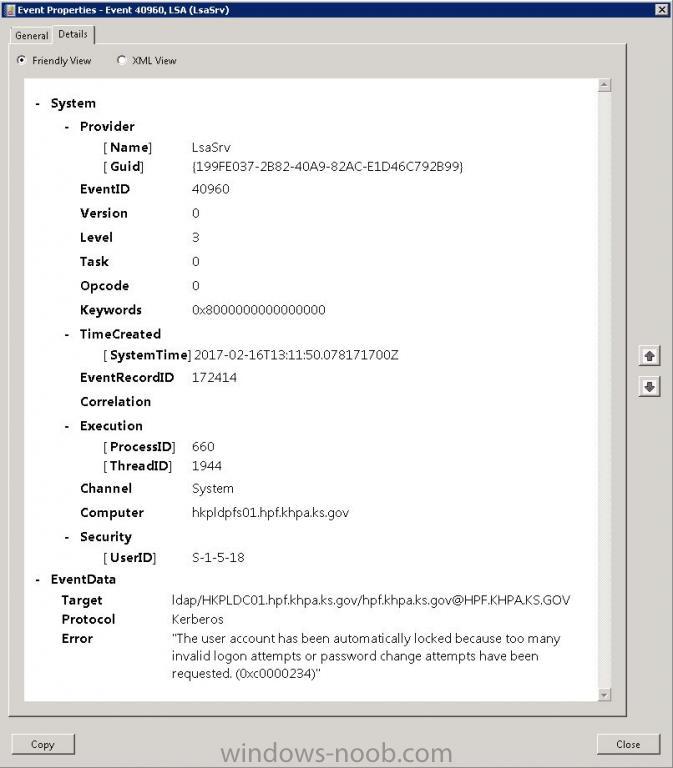

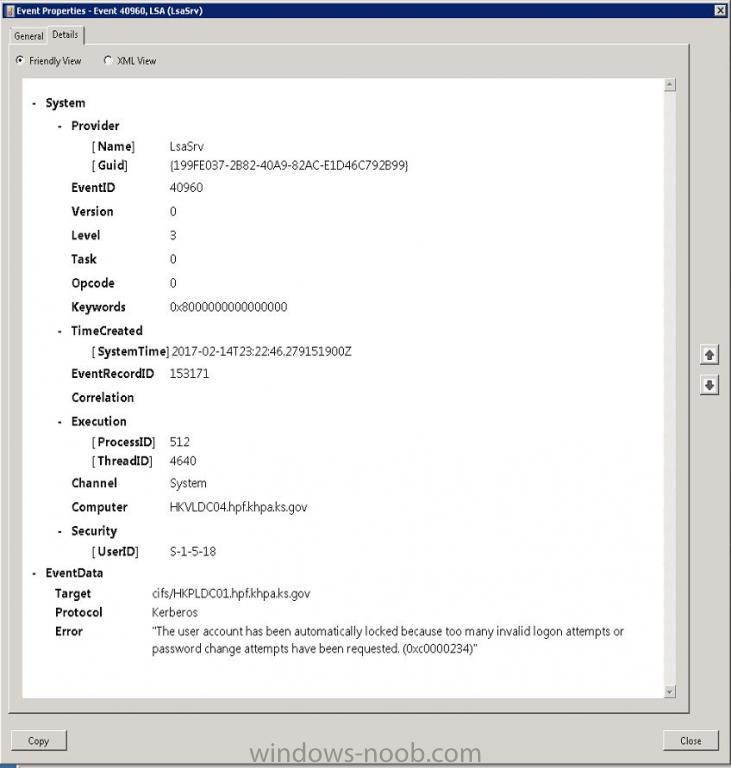

I have a Domain Admin account in one of our domains containing six DCs. My account is being locked out on a DAILY basis. I don't use that account to run any services. I'm attaching screen shots of the messages. They are nondescript in that they don't direct me to the server or computer that is generating the lockouts. How can I track down the machine that is locking out my account? Event log screen shots attached.
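From what I understand, the lockout event (ID 4740) in the Security log of the DC that processed the lockout includes a "Caller Computer Name" field, which is the piece I am after. As a rough Python sketch, assuming the event text has been exported or copied out of Event Viewer, the field could be pulled out as below. The function name and the sample event (account and machine names included) are made up for illustration.

```python
import re


def caller_computer(event_text: str):
    """Return the 'Caller Computer Name' from a 4740 event message, or None if absent/blank."""
    match = re.search(r"Caller Computer Name:[ \t]*(\S*)", event_text)
    if match is None:
        return None
    return match.group(1) or None


# Hypothetical event text laid out like a 4740 message.
sample_event = """A user account was locked out.

Subject:
    Security ID:        SYSTEM
    Account Name:       DC01$

Account That Was Locked Out:
    Account Name:       dino.admin

Additional Information:
    Caller Computer Name:   WS-0042
"""

print(caller_computer(sample_event))
```

If the field comes back blank on every event, that in itself would be useful to know, since it would suggest the bad attempts are not coming from a normal domain workstation logon.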

-

I Need to apply a User GPO to only specific users in an OU

ingram59 posted a question in Group Policy

I've created a User GPO to map a drive for users in a SUB-OU who need it. The GPO is linked to the user SUB-OU for the department in question. There are about 50 users in that SUB-OU and I only need the GPO to apply to about ten of them. I created a Global Security group with only those ten users and added it to Security Filtering for the GPO, thinking that would work. However, the GPO refuses to apply unless I leave Authenticated Users in Security Filtering. (Authenticated Users is now required for applying User GPOs.) In this scenario, the mapping then applies to ALL users in the linked SUB-OU and not simply to the ten users in the security group that I created.

How do I get the GPO to apply to the SUB-OU but still only set the mapping for the few users who need it? I don't want to make another SUB-OU just to accommodate drive mapping for these users, since this is a process that will have broader implications for other departments.

-

Sccm 2012 - Assistance with software uninstall

ingram59 replied to ingram59's topic in Configuration Manager 2012

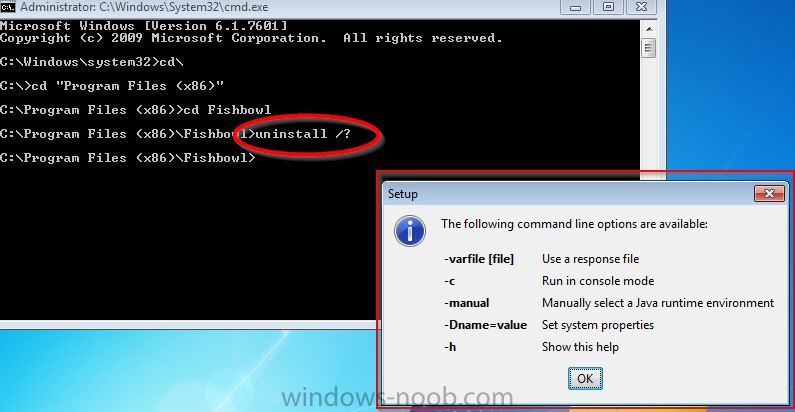

I appreciate the feedback so far. The installer and the uninstaller are VERY POORLY WRITTEN. There are no unattended or silent flags to use for the removal. Also, I already looked at the link in this thread and ran it. The application does not show up when I run the PowerShell commands. LOTS of Microsoft software shows up, though. It is not an MSI install. It is an EXE install. There is no uninstall key. I've attached a screen shot of the uninstall options. The "console mode" in the screen shot simply opens a GUI uninstall.

-

Sccm 2012 - Assistance with software uninstall

ingram59 posted a topic in Configuration Manager 2012

There is an older application (Fishbowl 2013) running on over 100 machines. It was manually installed. We just recently moved to SCCM. It was an EXE install and there is NO uninstall value in the registry to reference. The uninstall uses "uninstall.exe", located in the "program files (x86)\Fishbowl" folder. There are no flags that will allow me to provide input to the uninstaller. I am able to do a removal on the local machine without incident. The vendor was of no help.

What I want to do is use SCCM to uninstall this application so that we don't have to touch over 100 machines. I've tried creating a 'package' and an 'application', with a CMD file containing the full path to the uninstall command, but it doesn't work in either scenario. I don't want to 'install' the uninstaller, which is what both options want to do. I simply want to run the uninstaller 'hidden', so users get no prompts and it completes automatically.

I am not a PowerShell coder. I find it cryptic and confusing and am not able to grasp it. Any help would be greatly appreciated.

Dino