Everything posted by anyweb

-

You can upgrade directly to 2503; it's cumulative, so it should contain all the fixes and updates from the previous versions. Do check out the official known issues here: https://learn.microsoft.com/en-us/intune/configmgr/core/plan-design/changes/whats-new-in-version-2503 and my take here: https://www.niallbrady.com/2025/04/04/configuration-manager-2503-is-out-350-bugs-fixed/

-

Windows Driver Foundation - CPU Utilisation High

anyweb replied to jackie_jack86's question in Windows 10

I would escalate this to your Dell Technical Account Manager; this is not an SCCM problem, unless of course your quote here: was from when you imaged the Dell using your own task sequence. Is that the case?

SCCM - Task Sequence (Softwares)

anyweb replied to jackie_jack86's topic in Configuration Manager 2012

-

Well, my CMG from the post above fixed itself over a period of 2 weeks after I posted that blog post, i.e. it was a back-end fix from Microsoft and nothing that I did could change that. If you've made sure you have the latest updates/KBs applied to your 2503 environment and it's still showing this problem, try the fix mentioned above. If it helps, great; if not, hopefully Microsoft will again fix it in the back end.

-

SCCM - SSRP - Http 503 Error

anyweb replied to jackie_jack86's topic in System Center Configuration Manager (Current Branch)

Please see https://www.niallbrady.com/2020/09/15/fixing-an-evaluation-version-of-ssrs-with-http-error-503-the-service-is-unavailable/

-

Introduction

Microsoft recently released a new feature for Windows 365 Cloud PCs, namely the ability to move one or more Cloud PCs to another region. This involves editing a previously created provisioning policy and then deciding whether you want to move all Cloud PCs or only a subset targeted by that policy. All data, settings and so on on the device (except for stored snapshots) will be retained with the move, which is great from an end user perspective. Microsoft themselves recommend testing this on a few Cloud PCs to review how the end-to-end process works for your users’ Cloud PCs.

Why change location?

But first of all, why would we want to change the location of a Cloud PC? Changing the location of a Windows 365 Cloud PC can be beneficial for several reasons:

Improved Performance: Moving the Cloud PC to a location closer to the user can reduce latency and improve overall performance, making applications and services more responsive.

Compliance and Data Sovereignty: Different regions have varying regulations regarding data storage and processing. Moving the Cloud PC to a location that complies with local laws can ensure adherence to data sovereignty requirements.

Cost Optimization: Some regions may offer lower costs for cloud services. By relocating the Cloud PC to a more cost-effective region, businesses can reduce their operational expenses.

Disaster Recovery and Redundancy: Relocating the Cloud PC to a different region can enhance disaster recovery plans by ensuring redundancy and availability in case of regional outages or disasters.

User Experience: If a business has employees in different geographical locations, moving the Cloud PC closer to the majority of users can enhance their experience by providing faster access to resources.

Scalability: Certain regions may offer better scalability options or more advanced infrastructure, allowing businesses to expand their operations more efficiently.

Security: Some regions may have more robust security measures or offer specific compliance certifications that are important for certain industries.

So let’s dig in and see how this works in reality.

Moving one or more Cloud PCs

In the Intune console, locate the Windows 365 node and select Provisioning policies. Select the target provisioning policy and click it to edit it. The policy details are revealed. Take note of the current location configured within the policy; this is optional but useful to know if you want to revert to that location later on.

Review location before change

At this point, pick a test Cloud PC so that you can confirm that the move process goes smoothly. On that Cloud PC, verify the current location using a site such as https://www.whatismyipaddress.com or https://mylocation.org/. Below you can see the approximate location of our test Cloud PC before any move is attempted. As we can see, it’s listed in Iowa, in the United States.

1. Inform the users

Now that we know which provisioning policy we’ll be editing, and what location our target Cloud PCs reside in, we need to pick one or more users of those Cloud PCs and inform them of what is about to happen (and why). You need to explain to the users that they must save any open documents, close their apps and sign out of their Cloud PC for the weekend. It’s a good idea to do the move over the weekend, as some Cloud PCs will have more data/apps/settings to move than others and will therefore take longer.

2. Edit the policy

In the General section of the provisioning policy, click Edit and modify the Geography. I chose European Union as that’s where I want to move my target Cloud PCs. You can fine tune the region and select an actual region such as Sweden, but it’s recommended to choose the Automatic (Recommended) option to avoid provisioning failure. For a list of supported regions see here. As this is an actual move and not a provisioning, I chose Sweden. After editing the policy, click Next followed by Update.

3. Pick one or more target Cloud PCs

Now that you’ve changed the Geography and Region of your provisioning policy, click Apply this configuration to select one or more target Cloud PCs. To select one or more devices, click the third option, which is Region or Azure network connection for select devices, and click Apply. That will bring up a list of devices to select. Select one or more targets and place a check in the Cloud PC’s will be disconnected and shutdown while the configuration is updating. Any unsaved work may be lost message. Note that the disconnect only affects the selected devices, not all devices targeted by this provisioning policy.

4. Review the Cloud PCs’ status

The status of the selected Cloud PCs will change to Moving region or network in the All Cloud PC’s section of the Windows 365 node. Individual Cloud PCs will also show the new status in Intune devices via the Overview and Device action status areas of each device. Note that these statuses change even before the move of the actual target takes place, so the Cloud PC may still be online for a brief period before it receives the instruction to shut down.

You can also monitor the status using the Cloud PC actions report, which reveals some more data for individual Cloud PCs. If you selected multiple Cloud PCs, click on Bulk batches. After some time, the status on the device should update to Completed and the Cloud PC actions report will be updated with the new status. On the target PC itself, you can verify its location using the previously mentioned sites to confirm the new location. Job done!

Final thoughts

Keep in mind that after you’ve completed your Cloud PC move operations, any new Cloud PCs targeted by that provisioning policy will also be provisioned in the new region. This new ability is great, and I’ve tested it successfully in multiple tenants. I do however feel that with just a little bit more work it could be even better.

What I’d like to see is a native ability within Intune to send customizable emails/alerts/notifications for any Cloud PCs targeted by the move operation, both before and after the event, to alert the end users about what is happening and when, and more importantly to let them know that operations are complete. Great job Microsoft!

-

Introduction

In a previous blog post I started troubleshooting why my Windows app showed a blank white screen instead of the usual user interface. I did a bunch of troubleshooting but it didn’t lead anywhere, or so I thought. No matter what I did, I could not use the Windows app any more. So I kept digging.

By checking the Details section of Task Manager, I could see where the Windows365.exe executable file was launched from.

C:\Program Files\WindowsApps\MicrosoftCorporationII.Windows365_2.0.366.0_arm64__8wekyb3d8bbwe

I browsed the files and folders in there and found an interesting (to me) json file called system_settings.json in the Resources folder. It referenced the Microsoft remote desktop client agent (msrdc), and a quick search on the internet revealed that that version was not the latest. So I downloaded the latest version and installed it. I then re-attempted to start the Windows app, but to no avail; it was still blank.

The MSRDC agent

I’ve been using the Windows app since its first iteration, so it was easy to forget about msrdc. Launching the Microsoft Remote Desktop Client agent allowed me to see and use my Cloud PCs, so that was at least a workaround for accessing them until the problem was resolved.

Back to the problem though. Looking through Event Viewer I could see lots of errors in AppXDeployment-Server; maybe they had something to do with my problem. The error was:

Deployment Register operation with target volume 😄 on Package MicrosoftCorporationII.Windows365_2.0.365.0_arm64__8wekyb3d8bbwe from: (AppXManifest.xml) failed with error 0x80073D02. See http://go.microsoft.com/fwlink/?LinkId=235160 for help diagnosing app deployment issues.

I followed the link and it revealed the following for that error code.

WebView2 revisited

This ‘currently in use’ error got me thinking again about the Outlook New WebView2 problem, which I never managed to solve in part 1. But first, what is WebView2? See here.

WebView2 is a way for app developers to embed web content (such as HTML, JavaScript, and CSS) in Windows applications. By including the WebView2 control in an app, a developer can write code for a website or web app, and then reuse that web code in their Windows application, saving time and effort. See Introduction to Microsoft Edge WebView2.

So what was the Outlook New problem? Every time I started Outlook New, it would prompt me to install WebView2. As you can see in the previous part, I tried to fix that and thought that I had, but in reality the problem was still there and Outlook New always complained about the WebView2 requirement. Could the WebView2 installer be stuck in a loop somehow, and could that be preventing the Windows app from updating/deploying properly?

When I tried to solve it in part 1, I launched Outlook New from an administrative command prompt, or chose “run as administrator” after elevating myself. However, the solution we use for elevation only elevates individual processes, such as cmd.exe or whatever you click on. It seems that the WebView2 installer ignores the permissions that Outlook New is launched with.

Eureka

This became clear when I forgot my phone at home and thus was not able to enter the MFA code to elevate a process in my continued troubleshooting. Instead, I used ControlUp to elevate my session. I then logged off, logged on to Windows again, and tested my elevation; I was elevated. The difference here is that my Windows session was elevated, not just a process. I then launched Outlook New as administrator, got the WebView2 popup, clicked OK and … some minutes later, Outlook opened, for the first time in days! Clearly, WebView2 was now installed. I then launched the Windows app using ‘run as administrator’ and it too launched successfully! Wonderful!

So at this point I’m fairly confident that WebView2 was the problem here, or at least that both the Windows app and Outlook New for some reason needed the Windows session elevated in order to complete pending WebView2 update actions. I’m also very happy that my Windows app is once again working on my Qualcomm ARM64 laptop!

If you are wondering whether WebView2 is even used for the Windows app, open Task Manager, search for View and launch the Windows app. Look what appears. I’ve placed red rectangles around three things: Outlook, Windows 365 and MS Teams. Why the other apps? During the last week or so I also had serious lagging issues in Teams, and it too uses WebView2, as you can clearly see in the image above. Fixing the WebView2 installer in Outlook (New) via an elevated Windows session solved all my problems in the following apps:

Outlook (New)
Teams
Windows App

Update

I’ve since had the exact same problem occur another 3 times and I’m getting much better at fixing it, especially after reading this. In a nutshell, elevate your Windows session (as described above), then delete the following registry key:

HKLM\SOFTWARE\WOW6432Node\Microsoft\EdgeUpdate\Clients\{F3017226-FE2A-4295-8BDF-00C3A9A7E4C5}

Once done, run the Edge troubleshooter here. This will successfully download and reinstall WebView2 and life will be bearable again, for a while. I’m not sure what app or process is breaking my WebView2, but I can tell you this much: it’s very, very annoying.
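The registry clean-up from the Update section can be scripted. Below is a minimal Python sketch that only builds the `reg delete` command line for the key quoted in the post; it does not run anything. The helper name `build_reg_delete_command` is made up for illustration, and the command would still need to be executed from an elevated Windows session as described above.

```python
from __future__ import annotations

# Registry location quoted in the post (WebView2 entry under EdgeUpdate\Clients).
EDGEUPDATE_CLIENTS = r"HKLM\SOFTWARE\WOW6432Node\Microsoft\EdgeUpdate\Clients"
WEBVIEW2_CLIENT_ID = "{F3017226-FE2A-4295-8BDF-00C3A9A7E4C5}"


def build_reg_delete_command(key_path: str) -> list[str]:
    """Return the argument list for deleting a registry key without prompting.

    Hypothetical helper: it only builds the command, it does not run it.
    /f suppresses the confirmation prompt of reg.exe.
    """
    return ["reg", "delete", key_path, "/f"]


command = build_reg_delete_command(EDGEUPDATE_CLIENTS + "\\" + WEBVIEW2_CLIENT_ID)
print(" ".join(command))
```

Keeping the command as an argument list makes it easy to hand to `subprocess.run` later, once you are happy that the key path is the right one for your device.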

-

Introduction

I use my Windows app daily from a variety of devices to access my Cloud PCs. Today, like any day, I clicked the Windows app icon, but this time nothing happened; the familiar Windows app did not appear. So I clicked it again, and this time it did appear but was blank.

Note: This problem occurred only on my ARM64 device; the Windows app worked just fine on other X64 devices.

As you can see in the screenshot above, it’s completely blank, empty of any content, so it’s impossible to use.

Trying to fix it

I thought the app had somehow become corrupt, so I tried to reset and repair the app. Neither of these options helped. Next, I tried to end the processes for the Windows app in Task Manager, and killed the Windows365.exe process tree, but these didn’t help either. The app simply refused to display its content.

While troubleshooting I noticed a Windows cumulative update was ready to install; you can see it below with the blank Windows app in front. So I let Windows Update do its thing and manually restarted the computer. It didn’t help.

What about the logs?

Next I looked at the logs connected to the Windows app in %temp%\DiagOutputDir\Windows365\Logs as documented here. The logs (4 in total) did not give me anything to go on other than to highlight that I did have a problem. The most interesting log snippet was this:

[2025-03-21 12:53:49.547] [async_logger] [error] [MSAL:0003] ERROR ErrorInternalImpl:134 Created an error: 8xg43, StatusInternal::InteractionRequired, InternalEvent::None, Error Code 0, Context ‘Could not find an account. Both local account ID and legacy MacOS user ID are not present’

But I saw that it was repeated even when my Windows app was working, so I chose to ignore it.

So what else could I do at this point? Uninstall the app. I then reinstalled the app from the store. End result: same problem!

At this point I went back to the logs and found this section; it reminded me of an error I saw this week when launching Outlook (New).

The Outlook (New) WebView2 error is also shown on top of the log file below:

I never bothered to install that WebView2 component, as it requires local administrative permissions to do so and I’m a standard user (as I should be). So I elevated my session and tried clicking OK on the Outlook (New) message. That didn’t help; it just kept looping through a prompt asking me to install the new WebView2.

A Google search led me to this post, and I downloaded the WebView2 client (for ARM64) from here. After deleting the reg key, installing the WebView2 update and restarting the computer, I still had the issue with Outlook New prompting to install WebView2 and my Windows app not working.

Next up I checked the version of the Windows app I was running and it was the latest, 2.0.365.0 (OK, there’s an insider preview version that’s newer, but I don’t think that’ll help).

At this point I’m out of ideas and will inform the Microsoft group in charge of Windows 365 to see what they have to say about it. I’ll revert in part 2 once this is hopefully solved.

cheers
niall
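To judge whether an error like the MSAL one quoted earlier in this post is just repeated noise, it can help to count how often each error message occurs. The following is a rough Python sketch, assuming every line in the Windows app logs follows the same `[timestamp] [logger] [level] message` layout as that one snippet; the sample lines are shortened or made up for illustration.

```python
import re
from collections import Counter
from datetime import datetime

# Assumed layout, based only on the single snippet quoted in the post:
# [timestamp] [logger] [level] message
LINE_PATTERN = re.compile(
    r"^\[(?P<timestamp>[^\]]+)\] \[(?P<logger>[^\]]+)\] \[(?P<level>[^\]]+)\] (?P<message>.*)$"
)


def parse_line(line):
    """Split one log line into its parts, or return None if it does not match."""
    match = LINE_PATTERN.match(line.strip())
    if match is None:
        return None
    entry = match.groupdict()
    entry["timestamp"] = datetime.strptime(entry["timestamp"], "%Y-%m-%d %H:%M:%S.%f")
    return entry


def count_errors(lines):
    """Count how many times each distinct error message appears."""
    counts = Counter()
    for line in lines:
        entry = parse_line(line)
        if entry is not None and entry["level"] == "error":
            counts[entry["message"]] += 1
    return counts


# Sample data: the first line is a shortened copy of the snippet in the post,
# the other two are hypothetical.
SAMPLE_LINES = [
    "[2025-03-21 12:53:49.547] [async_logger] [error] [MSAL:0003] ERROR ErrorInternalImpl:134 Created an error: 8xg43",
    "[2025-03-21 12:54:10.101] [async_logger] [error] [MSAL:0003] ERROR ErrorInternalImpl:134 Created an error: 8xg43",
    "[2025-03-21 12:54:11.000] [async_logger] [info] hypothetical informational line",
]

for message, count in count_errors(SAMPLE_LINES).most_common():
    print(count, message)
```

Running the same count against logs from a working device and a broken one would show which errors appear in both and can probably be ignored.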

-

The last error in your provided log is "There are no task sequences available to this computer.. Please ensure you have at least one task sequence deployed to this computer. Unspecified error (Error: 80004005; Source: Windows)", so make sure that whatever you are PXE booting is actually in a collection that is targeted by a task sequence deployment.

-

i've messaged you

-

I asked Copilot and it gave me the response below. Try it! If it works, you can apply the registry values via a step in the task sequence, just before the screen normally shows up.

Open Registry Editor: press Win + R, type regedit, and press Enter.

Navigate to the following path: HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows\CurrentVersion\OOBE

Create a new DWORD (32-bit) Value: name it SkipMachineOOBE and set its value to 1.

Create another DWORD (32-bit) Value: name it SkipUserOOBE and set its value to 1.

These changes should bypass the "Just a moment" screen during the OSD process.
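If the manual test works, the same two values could be written by a command-line step in the task sequence. Here is a small Python sketch that generates the `reg add` commands for the key and value names given above; the helper name is made up, and whether these values actually suppress the screen is still untested, as noted.

```python
# Key and value names are taken from the suggestion above; whether they
# really bypass the "Just a moment" screen is untested.
OOBE_KEY = r"HKLM\SOFTWARE\Microsoft\Windows\CurrentVersion\OOBE"
OOBE_VALUES = {"SkipMachineOOBE": 1, "SkipUserOOBE": 1}


def build_reg_add_commands(key, values):
    """Return one 'reg add' command line per DWORD value (hypothetical helper)."""
    return [
        f'reg add "{key}" /v {name} /t REG_DWORD /d {data} /f'
        for name, data in values.items()
    ]


for line in build_reg_add_commands(OOBE_KEY, OOBE_VALUES):
    print(line)
```

Each printed line can be pasted into a command-line step in the task sequence, placed just before the point where the screen normally appears.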

-

vsphere pxe boot virtual machine in SCCM

anyweb replied to jackie_jack86's topic in Configuration Manager 2012

I'd suggest you talk to your network team.

vsphere pxe boot virtual machine in SCCM

anyweb replied to jackie_jack86's topic in Configuration Manager 2012

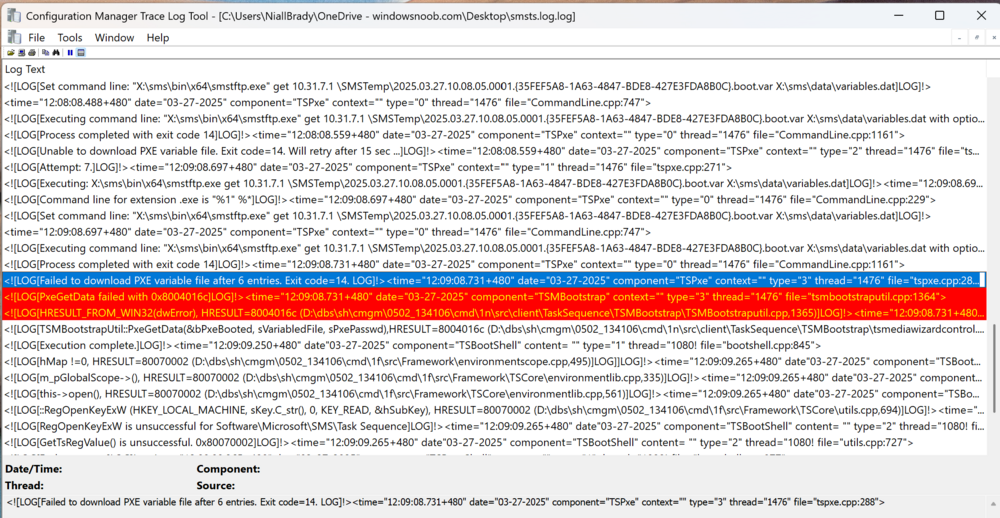

Here's the error in CMTrace. You really should use that for reviewing any SCCM log, as it highlights warnings and errors. The text of interest is: Failed to download PXE variable file after 6 entries. Exit code=14. A quick google reveals that this could be because your VMware client is on a different subnet to the one hosting your PXE enabled distribution point, or because your router is handling subnets incorrectly.
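Before talking to the network team, it is easy to check whether the virtual machine and the PXE enabled distribution point really sit in different subnets. A minimal Python sketch using the standard `ipaddress` module follows; the addresses and the /24 prefix are hypothetical placeholders, so substitute the values from your own environment.

```python
import ipaddress


def same_subnet(client_ip, dp_ip, prefix_length):
    """Return True if both addresses fall inside the same network for the given prefix."""
    client_network = ipaddress.ip_interface(f"{client_ip}/{prefix_length}").network
    dp_network = ipaddress.ip_interface(f"{dp_ip}/{prefix_length}").network
    return client_network == dp_network


# Hypothetical addresses: a VM, a second VM in another VLAN, and the distribution point.
print(same_subnet("10.0.1.25", "10.0.1.10", 24))
print(same_subnet("10.0.2.25", "10.0.1.10", 24))
```

If the two are in different subnets, PXE traffic has to be relayed between them (for example with IP helpers on the router), which is the part to raise with the network team.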

vsphere pxe boot virtual machine in SCCM

anyweb replied to jackie_jack86's topic in Configuration Manager 2012

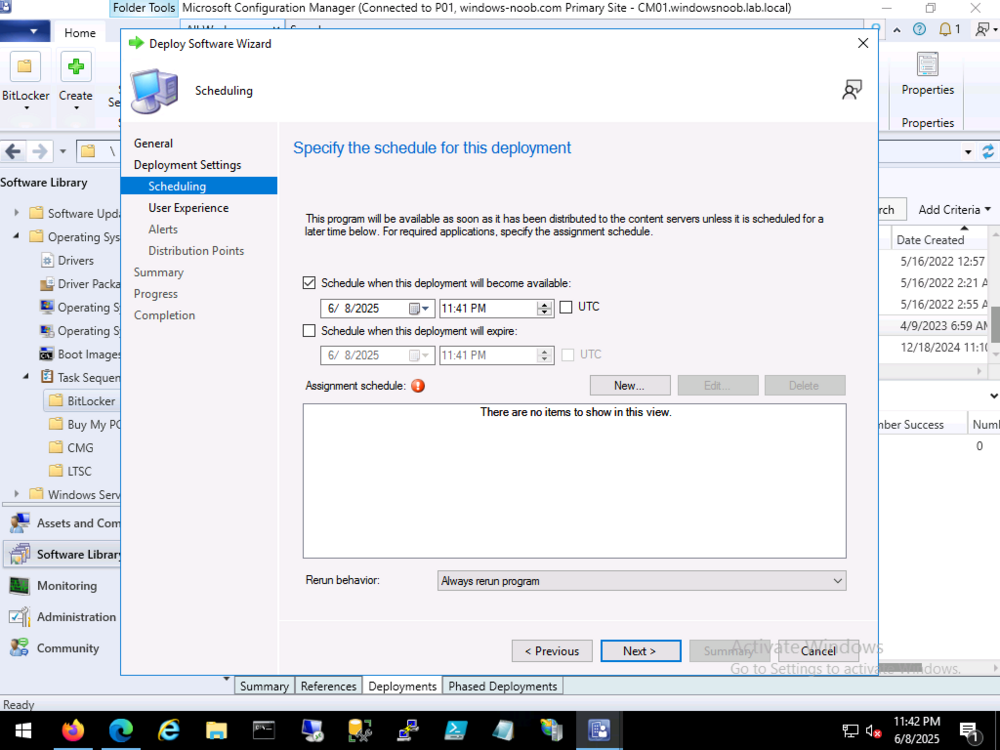

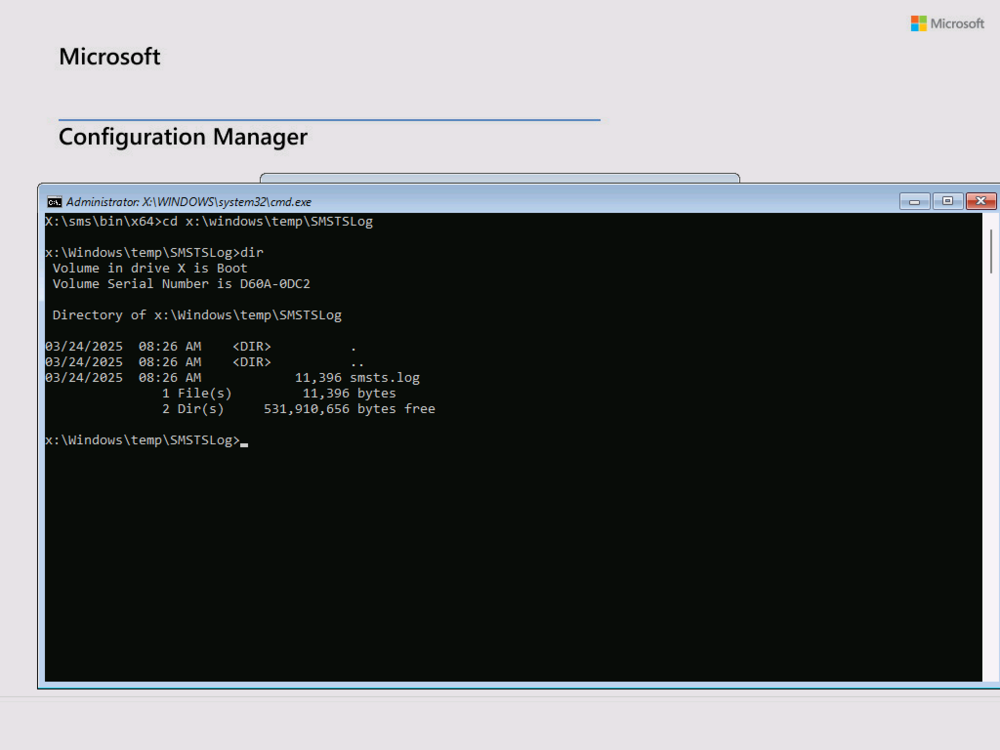

You need to press F8 as soon as you see the Configuration Manager wallpaper (see my screenshot below), and also make sure command prompt/F8 support is enabled on the boot image. Then grab the smsts.log in X:\Windows\Temp\SMSTSLog. In there you'll probably find out that the reason it's restarting is missing drivers or no task sequence being advertised to these devices. You can post the log here once you have it; you can copy it off the machine using a USB key, or map a network share using net use.
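If CMTrace isn't to hand once the smsts.log has been copied off the machine, the warnings and errors can be pulled out with a short script. The Python sketch below assumes the usual CMTrace-style entry layout (`<![LOG[...]LOG]!>` followed by attributes, where type 2 is a warning and type 3 an error); the sample entries, component name and file names are made up for illustration.

```python
import re

# Assumed CMTrace-style entry layout; verify against your own smsts.log.
ENTRY_PATTERN = re.compile(
    r'<!\[LOG\[(?P<message>.*?)\]LOG\]!>'
    r'<time="(?P<time>[^"]*)"\s+date="(?P<date>[^"]*)"\s+component="(?P<component>[^"]*)"'
    r'\s+context="(?P<context>[^"]*)"\s+type="(?P<type>\d)"'
    r'\s+thread="(?P<thread>[^"]*)"\s+file="(?P<file>[^"]*)">',
    re.DOTALL,
)

SEVERITY = {"1": "info", "2": "warning", "3": "error"}


def find_problems(log_text):
    """Return (severity, component, message) for every warning or error entry."""
    problems = []
    for match in ENTRY_PATTERN.finditer(log_text):
        if match.group("type") in ("2", "3"):
            problems.append(
                (SEVERITY[match.group("type")], match.group("component"), match.group("message"))
            )
    return problems


# Hypothetical sample entries in the assumed layout.
SAMPLE_LOG = (
    '<![LOG[Hypothetical informational entry]LOG]!>'
    '<time="10:15:01.123+000" date="01-15-2025" component="TSPxe" '
    'context="" type="1" thread="1234" file="example.cpp:10">\n'
    '<![LOG[There are no task sequences available to this computer.]LOG]!>'
    '<time="10:15:02.456+000" date="01-15-2025" component="TSPxe" '
    'context="" type="3" thread="1234" file="example.cpp:20">\n'
)

for severity, component, message in find_problems(SAMPLE_LOG):
    print(severity, component, message)
```

To run it against a real log, read the file into a string and pass that to `find_problems` instead of `SAMPLE_LOG`.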

script to push CMtrace

anyweb replied to frank offei's topic in System Center Configuration Manager (Current Branch)

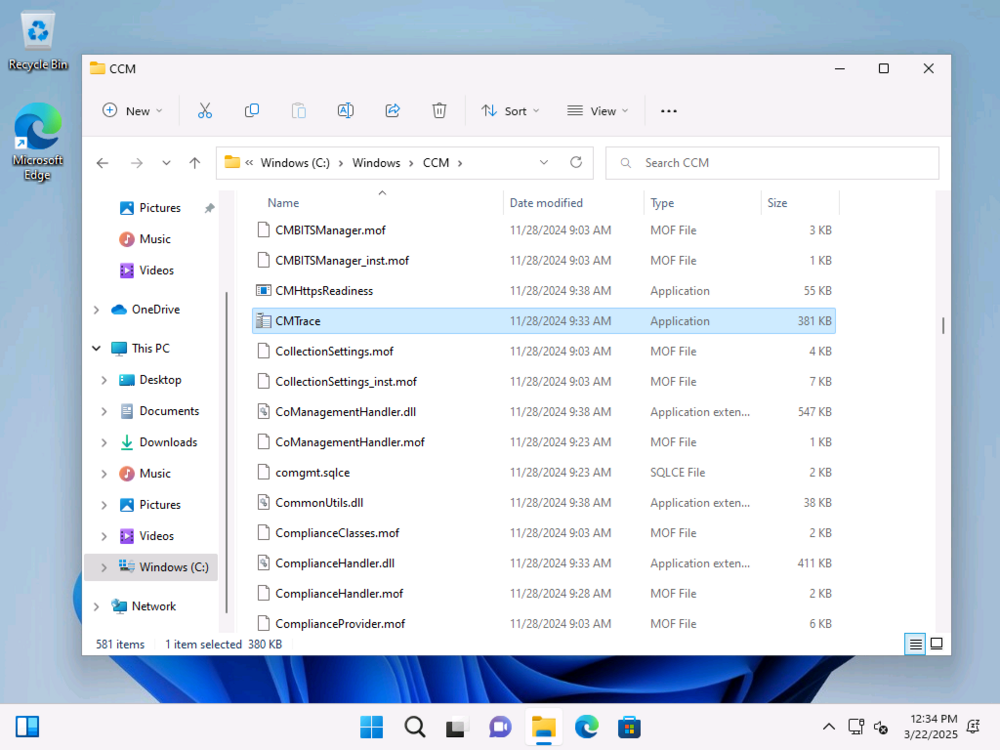

I guess you were trying to ask a question, but in case you didn't know, CMTrace.exe is already in the C:\Windows\CCM folder (see here), so as long as you have the ConfigMgr client installed, you'll also have CMTrace.

-

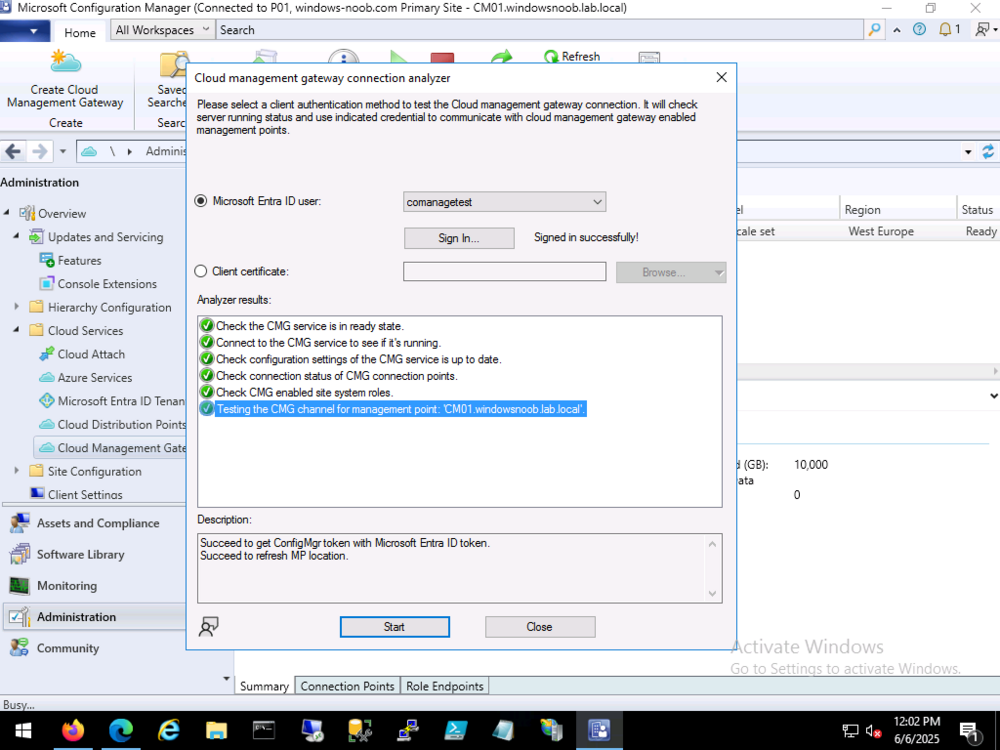

Introduction

Last month I posted about my CMG (Cloud Management Gateway) going AWOL (absent without leave) and staying broken. This was in response to a tweet from Panu, and I documented the sorry story here. I tried many things, including the hotfix that was available at the time of posting, but nothing helped. My CMG remained broken and stayed in a disconnected state. I had planned on removing it entirely and recreating the CMG, but time got the better of me.

Today however I got a notification from LinkedIn that someone had responded to one of my posts about that problem, so I took a look. Steven mentioned a hotfix rollup (HFRU), and it was a new one.

https://learn.microsoft.com/en-us/mem/configmgr/hotfix/2409/30385346

The issues fixed with this hotfix rollup are pasted from that article below, with the important CMG bits highlighted in bold italic:

Issues that are fixed

Internet based clients using the alternate content provider are unable to download content from a cloud management gateway or cloud distribution point.

Deployment or auto upgrade of cloud management gateways can fail due to an incorrect content download link.

Internet based clients can fail to communicate with a management point. The failure happens if the SMS Agent Host service (ccmexec.exe) on the management point terminates unexpectedly. Errors similar to the following are recorded in the LocationServices.log file on the clients.

[CCMHTTP] ERROR INFO: StatusCode=500 StatusText=CMGConnector_InternalServerError

The Configuration Manager console displays an exception when you check the properties of a Machine Orchestration Group (MOG). Membership of the MOG can’t be modified; it must be deleted and recreated. The exception happens when the only computer added to a MOG doesn’t have the Configuration Manager client installed.

Hardware inventory collection on a client gets stuck in a loop if the SMS_Processor WMI class is enabled, and the processor has more than 128 logical processors per core.

If a maintenance window is configured with Offset (days) value, it will fail to run on clients if the run date happens on the next month. Errors similar to the following are recorded in the UpdatesDeployment.log file.

Failed to populate Servicewindow instance {GUID}, error 0x80070057

The spCleanupSideTable stored procedure fails to run and generates exceptions on Configuration Manager sites using SQL Server 2019 when recent SQL cumulative updates are applied. The dbo.Logs table contains the following error.

"ERROR 6522, Level 16, State 1, Procedure spCleanupSideTable, Line 0, Message: A .NET Framework error occurred during execution of user-defined routine or aggregate "spCleanupSideTable": System.FormatException: Input string was not in a correct format. System.FormatException: at System.Number.StringToNumber(String str, NumberStyles options, NumberBuffer& number, NumberFormatInfo info, Boolean parseDecimal) at System.Number.ParseInt64(String value, NumberStyles options, NumberFormatInfo numfmt) at Microsoft.SystemsManagementServer.SQLCLR.ChangeTracking.CleanupSideTable(String tableToClean, SqlInt64& rowsDeleted) ."

Multiple URLs are updated to handle a back-end change to the content delivery network used for downloading Configuration Manager components and updates.

The Configuration Manager console can terminate unexpectedly if a dialog contains the search field.

This gave me some hope, so I powered up that lab and took a look. As you can see, the hotfix rollup is ready to install.

Note: Yes, I’m aware one of my app secrets is about to expire. That’s not part of this blog post or problem, so I’ll ignore it for now.

Before installing the hotfix rollup, however, I checked on the status of my CMG. And to my great surprise, after weeks (a month even) of being broken and disconnected, it was now... connected. Uh... what? OK, that’s weird. I ran the connection analyzer as well, just for giggles, and for the first time in a long time it passed with flying colours.

As the server log files have unfortunately rolled over, I cannot see when it fixed itself, or whether that happened on the back end (the CMG in Azure) or on the CM server itself. Just as a reminder, my now working CMG is in this state without me doing anything further after my original blog post, so it fixed itself after many weeks of being broken.

Installing the hotfix rollup

To wrap things up, I decided to install the hotfix rollup, to see what, if anything, it would do. After some time it was done; however, as you can see, I still have 2 Configuration Manager 2409 entries listed (one was the early ring upgrade).

Well, that’s it for this blog post. Thanks Steven for the heads up on the hotfix rollup; however, it didn’t resolve my issue, which seems to have solved itself prior to the rollup.
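To check whether clients are hitting the CMG connector error listed among the fixed issues, the LocationServices.log lines can be scanned for the status code and text. This is a rough Python sketch that looks only for the `StatusCode=... StatusText=...` pair shown in the hotfix article; the first sample line comes from that article, the others are hypothetical.

```python
import re
from collections import Counter

# Matches the StatusCode/StatusText pair shown in the hotfix article's example.
STATUS_PATTERN = re.compile(r"StatusCode=(?P<code>\d+)\s+StatusText=(?P<text>\S+)")


def summarize_http_errors(lines):
    """Count occurrences of each (status code, status text) pair found in the lines."""
    counts = Counter()
    for line in lines:
        match = STATUS_PATTERN.search(line)
        if match is not None:
            counts[(int(match.group("code")), match.group("text"))] += 1
    return counts


# First line is the example from the article; the rest are made up.
SAMPLE_LINES = [
    "[CCMHTTP] ERROR INFO: StatusCode=500 StatusText=CMGConnector_InternalServerError",
    "[CCMHTTP] ERROR INFO: StatusCode=500 StatusText=CMGConnector_InternalServerError",
    "hypothetical line without any status information",
]

for (code, text), count in summarize_http_errors(SAMPLE_LINES).items():
    print(code, text, count)
```

A count that drops to zero after the rollup is installed would be one small sign that the management point issue described in the article is no longer occurring.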